TL;DR — What You Need to Know

- AI adoption among competitive intelligence teams grew 76% year-over-year, with 60% of CI teams now using AI daily (Crayon State of CI 2025)

- AI changes CI across seven areas, from automated competitor monitoring to predictive intelligence, cutting manual research time by 80–90%

- The AI CI Tech Stack has four layers: Collection → Processing → Analysis → Distribution. You can start for $0 using ChatGPT or Claude and scale as you prove value.

- Our AI CI Maturity Model maps five levels from Manual to AI-Native. Most mid-market teams sit at Level 2 (AI-Assisted). The practical goal for 2026 is reaching Level 3–4.

- Start with a 30-day pilot tracking 2–3 competitors before investing in platforms. Most mid-market teams can run an effective AI-powered CI program for under $200/month.

- AI handles volume and pattern recognition. Strategic judgment and relationship intelligence remain human territory.

The top result for “AI competitive intelligence” on Google is a Reddit thread. That tells you everything about where the industry stands: everyone’s asking how to use AI for competitive intelligence, and nobody has published a thorough answer.

It’s not for lack of interest. AI adoption among CI teams grew 76% year-over-year in 2025 (Crayon State of CI). Yet most teams are still copy-pasting competitor URLs into ChatGPT and hoping for the best. There’s a wide gap between what AI-powered competitive intelligence can deliver and what teams are actually doing with it.

That gap exists partly because almost everything published on this topic comes from vendors selling CI software. Twelve of the top 19 Google results for this keyword are from companies with a product to push. Independent, vendor-neutral guidance on how to actually build an AI-powered CI program — with honest coverage of what works, what doesn’t, and what AI genuinely can’t do — is nearly nonexistent.

This guide covers:

- What AI actually changes in the CI workflow (and what it doesn’t)

- A 4-layer AI CI tech stack with specific tools at every budget level

- An original AI CI maturity model to assess where your team stands

- A 6-step implementation plan for mid-market teams (50–500 employees)

- An honest comparison of DIY vs. platform vs. hybrid approaches

- What AI can’t do, the limitations that vendor articles skip

No product to sell. No platform to pitch. Just the practitioner’s guide to AI competitive intelligence in 2026.

What Is AI Competitive Intelligence?

AI competitive intelligence is the use of artificial intelligence — including large language models, natural language processing, and machine learning — to automate and accelerate the gathering, analysis, and distribution of competitive intelligence. It applies AI across the full CI workflow: from monitoring hundreds of competitor sources simultaneously to generating battlecard updates in minutes instead of weeks.

AI-powered competitive intelligence doesn’t replace human judgment. It’s an augmentation layer that handles the volume and pattern-recognition tasks that overwhelm manual CI processes. Your CI team still decides what matters strategically. AI makes sure they don’t miss the signals buried in noise.

“The persistent problem with CI platforms, even the good ones with lots of integrations, has always been that they generate a huge amount of data from all their data sources, but making sense of it was very tough,” says Massimo Chieruzzi, Chief AI Officer at a European marketplace. “With AI, you can now get exact briefings and immediately identify what’s meaningful and what isn’t.”

Consider something as seemingly trivial as detecting changes on a competitor’s website. Before AI, it was extremely hard to determine whether an HTML change was just a CMS update or a meaningful content shift. As Chieruzzi puts it: “It was easy to say ‘this page changed 5%,’ but determining whether it was a meaningful 1% change or a completely unmeaningful 90% change was very tough. With AI, that distinction becomes vastly easier.”
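A minimal Python sketch of the problem Chieruzzi describes, using only the standard library's `difflib`. The page snapshots are hypothetical. A price increase and a copyright-year bump produce exactly the same change percentage, so the percentage alone can't tell you which one matters.

```python
from difflib import SequenceMatcher


def percent_changed(old_lines: list[str], new_lines: list[str]) -> float:
    """Share of a page that differs between two snapshots, compared line by line."""
    # ratio() is 2*M/T: M = matching lines, T = total lines in both snapshots.
    return 1.0 - SequenceMatcher(None, old_lines, new_lines).ratio()


# Hypothetical snapshots of a competitor pricing page, one entry per text line.
before = ["Acme Analytics", "Pro plan: $49/month", "© 2024 Acme Inc."]
price_hike = ["Acme Analytics", "Pro plan: $79/month", "© 2024 Acme Inc."]
footer_bump = ["Acme Analytics", "Pro plan: $49/month", "© 2025 Acme Inc."]

print(f"price hike:  {percent_changed(before, price_hike):.0%}")   # 33%
print(f"footer bump: {percent_changed(before, footer_bump):.0%}")  # 33%
```

Deciding which of the two identical-looking numbers is intelligence is the judgment an LLM (or a human) has to supply.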

The shift happened fast. Before 2020, competitive intelligence meant manual Google searches, quarterly pricing audits, and spreadsheets that were outdated before they were finished. Between 2020 and 2023, purpose-built CI tools like Crayon and Klue brought automated monitoring into the mainstream. From 2023 onward, large language models turned every CI practitioner into someone who could analyze and generate deliverables at a pace that was previously impossible.

60% of CI teams now use AI daily, up from 48% in 2024, a 25% relative increase (12 percentage points) in a single year (Crayon State of CI 2025). Meanwhile, 78% of organizations use AI in at least one business function (McKinsey, 2024), and Gartner projects that 40% of technology and service providers will use commercial competitive intelligence tools by 2026. The question isn’t whether AI belongs in your competitive intelligence program. It’s how far behind you are if you haven’t started.

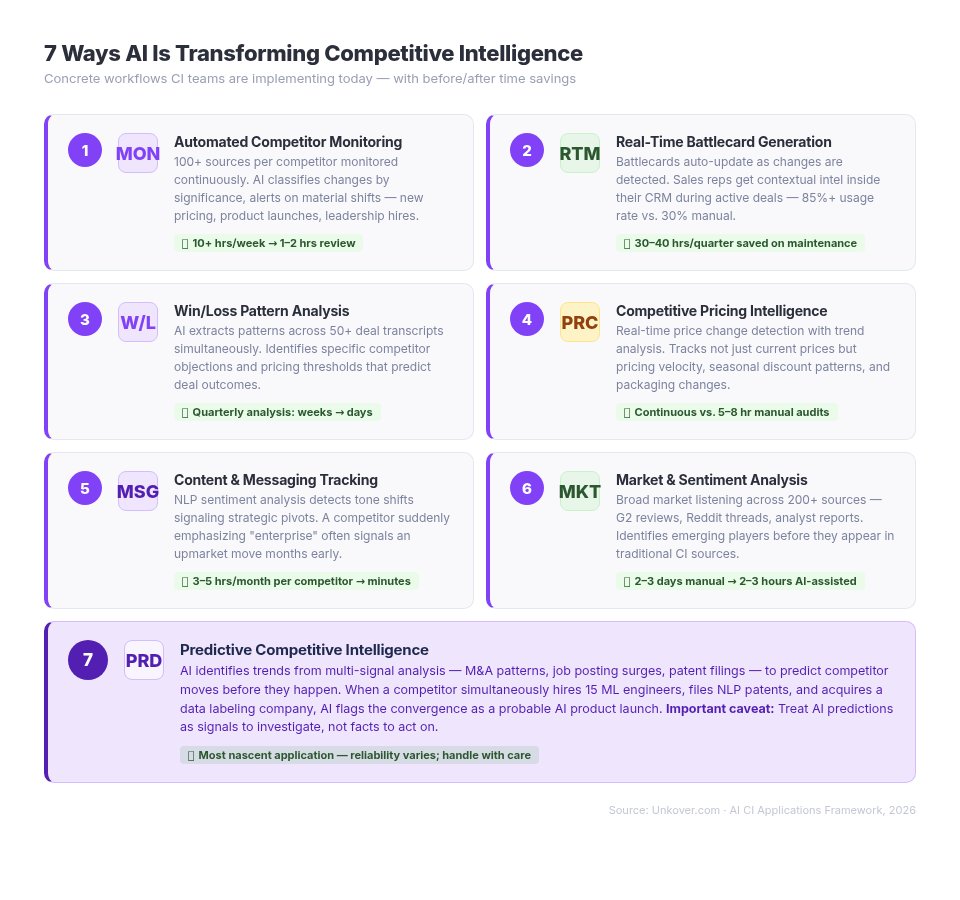

7 Ways AI Is Transforming Competitive Intelligence

These are workflows CI teams are implementing today, with concrete “before and after” comparisons for each.

1. Automated Competitor Monitoring at Scale

AI monitors hundreds of sources (websites, press releases, job boards, patent filings, social media, app store reviews) and surfaces material changes without anyone opening a browser tab.

Before AI: Analysts manually check 5–10 sources per competitor weekly. One person can realistically track 3–5 competitors across 15–20 sources. Strategic moves get missed because nobody checked the right page on the right day.

After AI: 100+ sources per competitor monitored continuously. AI classifies changes by significance, filters noise, and alerts your team only when something materially shifts: a new pricing page, a leadership hire, a product launch announcement.

Time savings: What took 10+ hours per week now takes 1–2 hours of review.

Tools: Crayon, Klue, Visualping + LLM summarization, custom RSS + AI pipelines, Google Alerts (free baseline).
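Classification itself is an LLM call, but the plumbing around it is ordinary code. The Python sketch below assumes a hypothetical prompt and JSON reply format (`significance`, `category`, `summary`); the model call is left out because it depends on your provider. Replies that fail to parse are routed to a human instead of being silently dropped.

```python
import json

SIGNIFICANCE_LEVELS = ("noise", "minor", "material")

# Hypothetical prompt; doubled braces survive str.format() as literal JSON braces.
PROMPT_TEMPLATE = """You are a competitive intelligence analyst.
Competitor: {competitor}
Page: {url}
Diff (lines starting with - were removed, + were added):
{diff}

Classify the change. Reply with JSON only, in this shape:
{{"significance": "noise|minor|material", "category": "<short label>", "summary": "<one sentence>"}}"""


def build_prompt(competitor: str, url: str, diff: str) -> str:
    return PROMPT_TEMPLATE.format(competitor=competitor, url=url, diff=diff)


def should_alert(raw_reply: str) -> bool:
    """Alert only on material changes; send anything unparseable to a human."""
    try:
        level = json.loads(raw_reply)["significance"]
    except (json.JSONDecodeError, KeyError, TypeError):
        return True
    if level not in SIGNIFICANCE_LEVELS:
        return True
    return level == "material"


prompt = build_prompt(
    "Acme", "https://acme.example/pricing",
    "- Pro plan: $49/month\n+ Pro plan: $79/month",
)
# Send `prompt` to your LLM of choice (call omitted), then route its reply:
print(should_alert('{"significance": "material", "category": "pricing", "summary": "Pro up $30"}'))  # True
print(should_alert('{"significance": "noise", "category": "footer", "summary": "New year"}'))  # False
```

Failing open (alerting on a bad reply) costs a few minutes of review; failing closed could hide the one pricing change that mattered.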

2. Real-Time Battlecard Generation and Updates

AI generates and updates competitive battlecards from collected intelligence. Pricing changes, new features, messaging shifts — all reflected automatically instead of waiting for the next quarterly update cycle.

Before AI: Battlecards updated quarterly if someone remembered. Sales reps stopped trusting them because the data was stale. Battlecard usage in organizations running manual processes sits below 30%.

After AI: Battlecards update as changes are detected. Reps get contextual competitive intelligence surfaced inside their CRM during active deals. Organizations with automated battlecard delivery see usage rates jump to 85%+ (Arise GTM).

Time savings: Product marketing teams save 30–40 hours per quarter on battlecard maintenance alone.

Tools: Klue Compete Agent, Crayon AI Toolkit, DIY with Claude or GPT + structured prompt templates.

3. Win/Loss Pattern Analysis

AI analyzes call transcripts (from Gong, Chorus, or Aircall) and win/loss interview data to identify patterns across dozens, even hundreds, of deals simultaneously. It catches correlations that humans miss in manual review.

Before AI: Manual review of 5–10 deal transcripts per quarter. Patterns emerge slowly and subjectively. CRM “closed lost” reasons are notoriously unreliable: 85% of closed-lost CRM data is inaccurate (Clozd).

After AI: Pattern extraction across 50+ deals in hours. AI identifies specific competitor objections that correlate with losses, pricing thresholds that predict deal outcomes, and messaging patterns that consistently win.

Time savings: Quarterly win/loss analysis compressed from weeks to days.

For a detailed implementation framework, see our win/loss analysis guide.
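Once an LLM has tagged each deal's transcript with the objections raised, checking whether an objection correlates with losses is plain counting. A minimal Python sketch with hypothetical deals and tags; with samples this small, treat the gap as a pattern to investigate, not proof of cause.

```python
from dataclasses import dataclass, field


@dataclass
class Deal:
    won: bool
    # Objection tags, e.g. extracted by an LLM from call transcripts (hypothetical).
    objections: set[str] = field(default_factory=set)


def loss_rates(deals: list[Deal], objection: str) -> tuple[float, float]:
    """Loss rate for deals where `objection` came up vs. deals where it didn't."""

    def rate(group: list[Deal]) -> float:
        return sum(not d.won for d in group) / len(group) if group else 0.0

    raised = [d for d in deals if objection in d.objections]
    not_raised = [d for d in deals if objection not in d.objections]
    return rate(raised), rate(not_raised)


deals = [
    Deal(won=False, objections={"missing SSO"}),
    Deal(won=False, objections={"missing SSO", "price"}),
    Deal(won=True, objections={"missing SSO"}),
    Deal(won=True, objections={"price"}),
    Deal(won=True),
    Deal(won=False, objections={"price"}),
]
with_sso, without_sso = loss_rates(deals, "missing SSO")
print(f"lost {with_sso:.0%} when SSO came up, {without_sso:.0%} otherwise")  # 67%, 33%
```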

4. Competitive Pricing Intelligence

AI monitors competitor pricing pages, packaging changes, discount patterns, and freemium-to-paid transitions. Dynamic pricing environments that were impossible to track manually become transparent.

Before AI: Quarterly manual pricing audits. By the time your spreadsheet was updated, competitors had already changed again.

After AI: Real-time price change detection with trend analysis. AI tracks not just current prices but pricing velocity: how often a competitor changes pricing, in which direction, and whether discounts follow seasonal patterns.

Time savings: Continuous monitoring replaces manual audits that consumed 5–8 hours per cycle.
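Pricing velocity falls out of a simple price history. A minimal Python sketch, assuming you already store dated price observations for one plan (the history below is hypothetical): it counts changes, splits them by direction, and records the months they happened in, so seasonal discounting becomes visible over a longer history.

```python
from datetime import date


def pricing_velocity(history: list[tuple[date, float]]) -> dict:
    """Summarize how often, and in which direction, a tracked price moves."""
    history = sorted(history)  # oldest observation first
    changes = [
        (day, new - old)
        for (_, old), (day, new) in zip(history, history[1:])
        if new != old
    ]
    span_days = (history[-1][0] - history[0][0]).days if history else 0
    return {
        "changes": len(changes),
        "increases": sum(1 for _, delta in changes if delta > 0),
        "decreases": sum(1 for _, delta in changes if delta < 0),
        "change_months": [day.month for day, _ in changes],
        # Average observed days per price change across the whole history.
        "days_per_change": span_days / len(changes) if changes else None,
    }


# Hypothetical monthly snapshots of a competitor's Pro plan price.
history = [
    (date(2025, 1, 6), 49.0),
    (date(2025, 2, 3), 49.0),
    (date(2025, 3, 3), 59.0),
    (date(2025, 4, 7), 59.0),
    (date(2025, 5, 5), 49.0),
    (date(2025, 6, 2), 59.0),
]
print(pricing_velocity(history))
```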

5. Content and Messaging Tracking

AI analyzes competitor content strategy at a granularity that manual monitoring can’t match: blog frequency, topic shifts, messaging evolution, positioning changes, ad copy variations. Whether you need a competitive analysis AI tool for systematic tracking or just want to stop relying on ad-hoc observations, this is where AI makes the biggest day-to-day difference.

Before AI: Ad-hoc content reviews. Someone on the team occasionally checks a competitor’s blog and reports back anecdotally.

After AI: Systematic tracking with change alerts. NLP sentiment analysis detects tone shifts that signal strategic pivots. A competitor suddenly emphasizing “enterprise” over “startup-friendly” often signals an upmarket move months before a formal announcement.

Time savings: From sporadic check-ins consuming 3–5 hours per competitor per month to automated weekly digests reviewed in minutes.
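Production tools use NLP models for this, but the underlying idea can be sketched with simple keyword counting. A minimal Python example; the term lists and page copy are hypothetical, and keyword share is a crude proxy for positioning, not a substitute for sentiment analysis.

```python
import re

# Hypothetical term lists; tune them to your market's vocabulary.
ENTERPRISE_TERMS = ("enterprise", "soc 2", "sso", "compliance")
SMB_TERMS = ("startup", "small team", "free plan", "self-serve")


def count_terms(text: str, terms: tuple[str, ...]) -> int:
    """Count term occurrences that start at a word boundary ("startups" counts)."""
    text = text.lower()
    return sum(len(re.findall(r"\b" + re.escape(term), text)) for term in terms)


def enterprise_share(text: str) -> float:
    """Fraction of positioning-term mentions that are enterprise-flavored."""
    ent = count_terms(text, ENTERPRISE_TERMS)
    smb = count_terms(text, SMB_TERMS)
    return ent / (ent + smb) if ent + smb else 0.0


# Hypothetical homepage copy captured two quarters apart.
q1 = "Built for startups and small teams. Start on the free plan, fully self-serve."
q3 = "Enterprise-grade security: SOC 2, SSO, and compliance controls. Startups welcome."
print(f"{enterprise_share(q1):.0%} -> {enterprise_share(q3):.0%}")  # 0% -> 80%
```

Matching only at the start of a word keeps "sso" from firing inside words like "lesson" while still catching plurals.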

6. Market and Sentiment Analysis

AI processes large volumes of unstructured data (analyst reports, G2 and Capterra reviews, social media discussions, Reddit threads, forum posts) to extract competitive sentiment and identify emerging players before they appear in traditional CI sources.

Before AI: Limited to manual review of key publications and industry reports. Review sites checked occasionally. Emerging competitors discovered only after they started winning deals.

After AI: Broad market listening across hundreds of sources. AI identifies sentiment trends. If a competitor’s G2 reviews shift from praising “ease of use” to complaining about “scaling issues,” that’s intelligence your sales team can use immediately.

Time savings: Comprehensive market analysis covering 200+ sources compressed from 2–3 days of manual work to 2–3 hours of AI-assisted review.
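If each review has already been tagged with themes (by an LLM or by hand), the shift described above is a comparison of theme shares between two periods. A minimal Python sketch with hypothetical reviews; each value is the change in the share of reviews mentioning a theme, so 0.75 means +75 percentage points.

```python
from collections import Counter


def theme_shares(reviews: list[set[str]]) -> dict[str, float]:
    """Fraction of reviews in a period that mention each theme."""
    counts = Counter(theme for tags in reviews for theme in tags)
    return {theme: n / len(reviews) for theme, n in counts.items()} if reviews else {}


def theme_shift(before: list[set[str]], after: list[set[str]]) -> dict[str, float]:
    """Change in each theme's share of reviews between two periods."""
    old, new = theme_shares(before), theme_shares(after)
    return {t: new.get(t, 0.0) - old.get(t, 0.0) for t in old.keys() | new.keys()}


# Hypothetical theme tags for a competitor's G2 reviews in two quarters.
q1 = [{"ease of use"}, {"ease of use", "support"}, {"ease of use"}, {"pricing"}]
q2 = [{"ease of use"}, {"scaling issues"}, {"scaling issues", "support"}, {"scaling issues"}]

for theme, delta in sorted(theme_shift(q1, q2).items(), key=lambda kv: kv[1], reverse=True):
    print(f"{theme:15} {delta:+.0%}")
```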

7. Predictive Competitive Intelligence

Machine learning competitive intelligence takes AI-powered CI to its most forward-looking application: predicting competitor moves before they happen. AI identifies trends from historical data — including M&A activity signals, market entry patterns, product launch timelines inferred from job postings and patent filings, and hiring surges that indicate new initiatives.

Before AI: Predictions based on gut instinct and informal pattern matching. Accuracy was inconsistent and unscalable.

After AI: Data-driven predictions based on multi-signal analysis. When a competitor simultaneously hires 15 ML engineers, files three NLP-related patents, and acquires a small data labeling company, AI flags the convergence as a probable AI product launch.

Important caveat: Predictive CI is the least mature of these seven applications. Reliability varies significantly. Treat AI predictions as signals to investigate, not facts to act on. The real value is in surfacing patterns that deserve human attention, not in replacing strategic judgment.
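The multi-signal convergence described above can be approximated with a simple rule rather than a trained model. A minimal Python sketch: flag any competitor showing at least three distinct signal types within a 90-day window. The window, the threshold, and the signal data are all hypothetical, and the output is a list of competitors to investigate, not a prediction.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Signal:
    competitor: str
    kind: str  # e.g. "hiring", "patent", "acquisition"
    day: date


def converging(signals: list[Signal], window_days: int = 90, min_kinds: int = 3) -> set[str]:
    """Competitors with >= min_kinds distinct signal types inside one time window."""
    by_competitor: dict[str, list[Signal]] = {}
    for s in signals:
        by_competitor.setdefault(s.competitor, []).append(s)

    flagged = set()
    for competitor, items in by_competitor.items():
        items.sort(key=lambda s: s.day)
        for i, first in enumerate(items):
            window_end = first.day + timedelta(days=window_days)
            kinds = {s.kind for s in items[i:] if s.day <= window_end}
            if len(kinds) >= min_kinds:
                flagged.add(competitor)
                break
    return flagged


signals = [
    Signal("Acme", "hiring", date(2025, 1, 10)),
    Signal("Acme", "patent", date(2025, 2, 1)),
    Signal("Acme", "acquisition", date(2025, 3, 15)),
    Signal("Globex", "hiring", date(2025, 1, 5)),
    Signal("Globex", "patent", date(2025, 6, 1)),
    Signal("Globex", "acquisition", date(2025, 12, 1)),
]
print(converging(signals))  # {'Acme'}
```

Globex shows the same three signal types, but spread across a year, which is exactly the kind of case a human should weigh rather than a rule.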

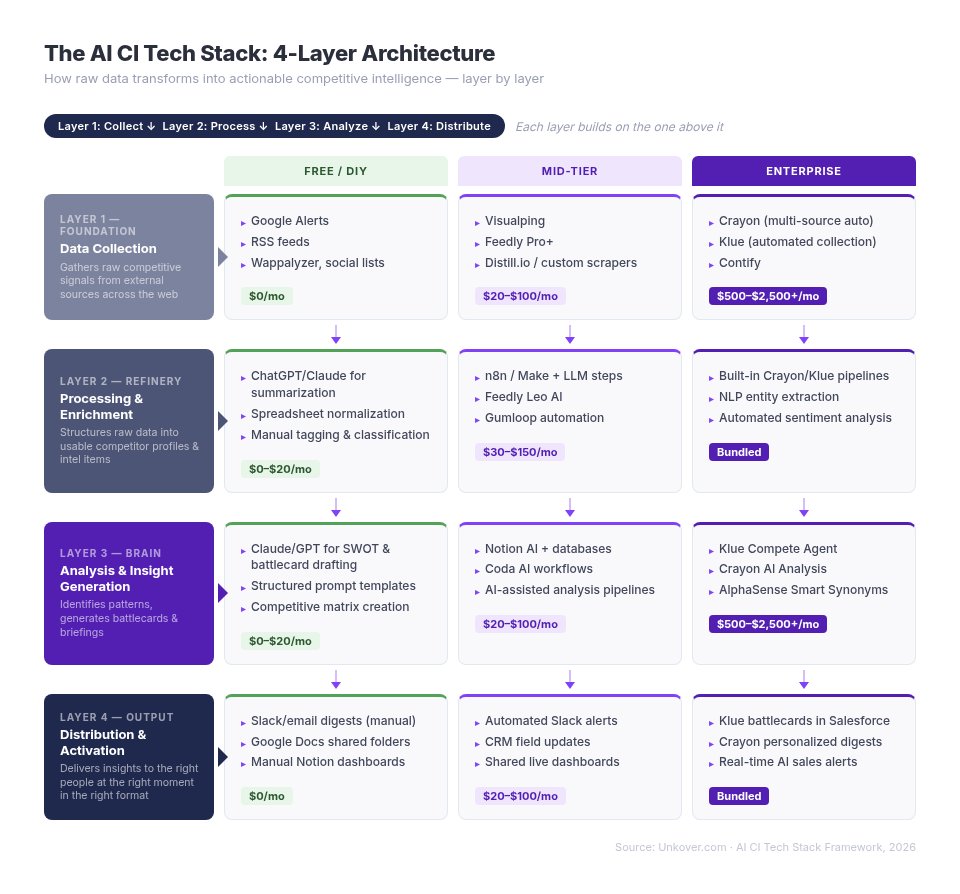

The AI CI Tech Stack: What You Actually Need

Most articles about AI competitive intelligence list tools without showing how they connect. Effective competitive intelligence automation requires more than buying software. It requires a layered architecture where each component builds on the one below it. Here’s the four-layer stack that actually works.

Layer 1 — Data Collection

What it does: Gathers raw competitive signals from external sources across the web.

| Budget Tier | Tools | Monthly Cost |
|---|---|---|
| Free/DIY | Google Alerts, RSS feeds, social media lists, Wappalyzer, manual monitoring | $0 |
| Mid-Tier | Visualping, Feedly Pro+, Distill.io, custom web scrapers | $20–$100 |
| Enterprise | Crayon, Klue, Contify (automated multi-source collection) | $500–$2,500+ |

AI’s role at this layer: LLMs filter signal from noise, classify incoming information by competitor and topic, and prioritize what deserves human attention. Without AI filtering, automated collection generates more noise than insight.

Layer 2 — Processing and Enrichment

What it does: Cleans, structures, and enriches raw data into usable intelligence. Raw web scrapes become structured competitor profiles. Unformatted press releases become categorized intelligence items.

| Budget Tier | Tools | Monthly Cost |
|---|---|---|
| Free/DIY | ChatGPT/Claude for summarization and extraction, spreadsheet normalization | $0–$20 |
| Mid-Tier | n8n/Make workflows with LLM steps, Feedly Leo AI, Gumloop | $30–$150 |
| Enterprise | Built into Crayon/Klue/Contify pipelines | Bundled |

AI’s role at this layer: NLP extracts entities, classifies topics, identifies sentiment, and detects material changes versus routine noise. A competitor updating their copyright footer isn’t intelligence. A competitor rewriting their homepage value proposition is. A well-prompted model can tell the difference.
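A cheap rule-based pass can strip routine noise before any diff reaches an LLM, so the model only judges changes that survive. A minimal Python sketch; the noise patterns and page text are hypothetical and would need tuning per site.

```python
import re

# Hypothetical patterns for content that changes without carrying intelligence.
NOISE_PATTERNS = [
    re.compile(r"©\s*\d{4}"),                           # copyright years
    re.compile(r"\b\d{4}-\d{2}-\d{2}(?:T[\d:.]+Z?)?"),  # ISO dates and timestamps
]


def normalize(text: str) -> str:
    """Remove known-noise patterns and collapse whitespace."""
    for pattern in NOISE_PATTERNS:
        text = pattern.sub("", text)
    return " ".join(text.split())


def needs_review(old: str, new: str) -> bool:
    """True if the page still differs once routine noise is removed."""
    return normalize(old) != normalize(new)


old = "Analytics for growing teams.\n© 2024 Acme Inc. Updated 2024-11-02"
footer_only = "Analytics for growing teams.\n© 2025 Acme Inc. Updated 2025-01-15"
new_pitch = "Enterprise analytics, governed.\n© 2025 Acme Inc. Updated 2025-01-15"

print(needs_review(old, footer_only))  # False
print(needs_review(old, new_pitch))    # True
```

Only the second change would be sent on to the model for classification.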

Layer 3 — Analysis and Insight Generation

What it does: Identifies patterns, generates insights, and creates deliverables: battlecards, SWOT analyses, competitive matrices, executive briefings.

| Budget Tier | Tools | Monthly Cost |
|---|---|---|
| Free/DIY | Claude/GPT with structured prompts for SWOT generation, competitive matrix creation, battlecard drafting | $0–$20 |
| Mid-Tier | AI-assisted analysis workflows (Notion AI + databases, Coda AI) | $20–$100 |
| Enterprise | Klue Compete Agent, Crayon AI Analysis, AlphaSense Smart Synonyms | $500–$2,500+ |

AI’s role at this layer: Pattern recognition across large datasets, automated first-draft deliverable generation, and framework application (SWOT, Porter’s Five Forces, competitive positioning maps). The important word is “first draft.” Human analysts refine the output and add the strategic judgment that AI can’t provide.

Layer 4 — Distribution and Activation

What it does: Delivers competitive insights to the right people at the right moment. Intelligence locked in a shared drive is worthless. Distribution is where CI creates business impact.

| Budget Tier | Tools | Monthly Cost |
|---|---|---|
| Free/DIY | Slack/email digests (manual), Google Docs shared folders | $0 |
| Mid-Tier | Automated Slack alerts, CRM field updates, shared dashboards | $20–$100 |
| Enterprise | Klue battlecard distribution in Salesforce, Crayon email digests, real-time sales alerts | Bundled |

AI’s role at this layer: Context-aware delivery, surfacing relevant competitive intelligence during active deals based on which competitor is involved. Personalized digest generation that sends different intelligence to different roles. One enterprise deployment saw 850+ competitive questions asked via an internal AI chat assistant in 30 days, with Crayon-powered answers rated 2.8x more helpful than generic AI output.
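Context-aware, role-specific delivery comes down to routing rules applied to intelligence that has already been classified. A minimal Python sketch; the roles, topic labels, and the rule that sales only sees competitors in open deals (which in practice would come from your CRM) are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class IntelItem:
    competitor: str
    topic: str  # e.g. "pricing", "product", "hiring"
    summary: str


# Hypothetical routing rules: which topics each role wants to hear about.
ROLE_TOPICS = {
    "sales": {"pricing", "product"},
    "product": {"product", "hiring"},
    "exec": {"pricing", "product", "hiring"},
}


def build_digests(items: list[IntelItem], open_deal_competitors: set[str]) -> dict[str, list[str]]:
    """One digest per role; sales only sees competitors in currently open deals."""
    digests: dict[str, list[str]] = defaultdict(list)
    for item in items:
        for role, topics in ROLE_TOPICS.items():
            if item.topic not in topics:
                continue
            if role == "sales" and item.competitor not in open_deal_competitors:
                continue
            digests[role].append(item.summary)
    return dict(digests)


items = [
    IntelItem("Acme", "pricing", "Acme raised Pro to $79/month"),
    IntelItem("Globex", "hiring", "Globex posted 15 ML engineering roles"),
    IntelItem("Globex", "pricing", "Globex added a free tier"),
]
for role, lines in build_digests(items, open_deal_competitors={"Acme"}).items():
    print(role, lines)
```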

The bottom line: You don’t need all four layers running on day one. Start with Layers 1 and 3 (basic collection and AI-powered analysis) and expand as you prove value. A functional AI-powered CI stack can cost as little as $0–$50/month. For a complete breakdown of competitive intelligence tools at every price point, see our 2026 roundup.

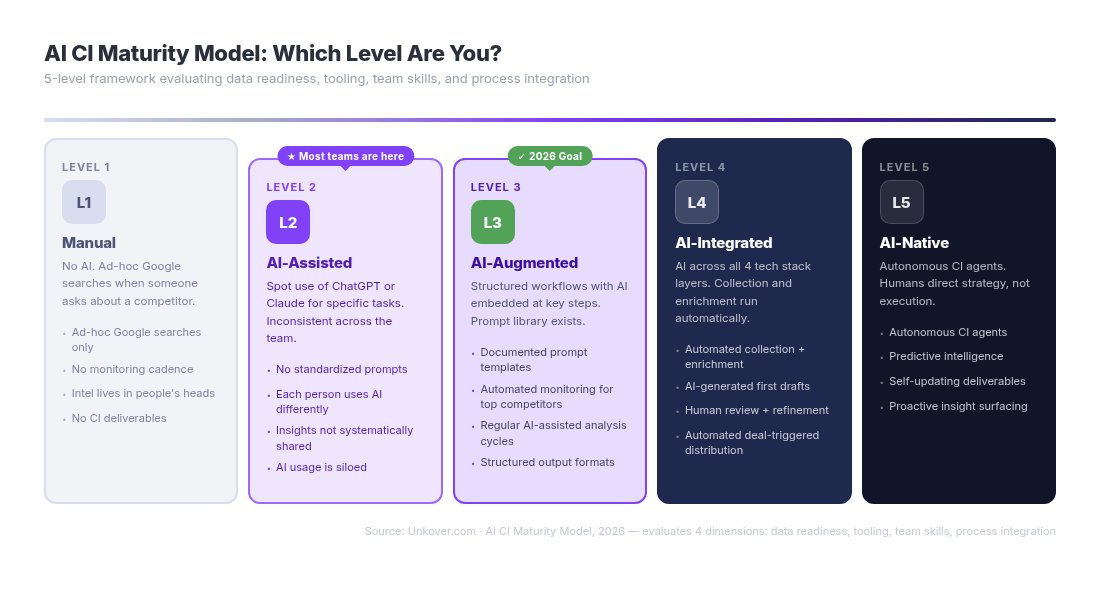

The AI CI Maturity Model: Where Does Your Team Stand?

Where you are on this model determines where to start. We developed this five-level framework because the only comparable model we found (Arise GTM) focuses narrowly on automation. Our model evaluates four dimensions: data readiness, tooling, team skills, and process integration.

Level 1 — Manual (No AI)

CI done entirely through manual research, spreadsheets, and tribal knowledge. Someone Googles competitor names when a deal comes up. There’s no structured process.

Characteristics: Ad-hoc Google searches, no monitoring cadence, intelligence lives in people’s heads, no CI deliverables beyond occasional slides.

Common at: Early-stage startups, teams with no dedicated CI function.

AI readiness: Low. Start with Level 2 quick wins: have one person use ChatGPT/Claude to analyze a competitor’s pricing page or summarize their latest blog posts.

Level 2 — AI-Assisted (Spot Use)

Individual team members use ChatGPT or Claude for specific CI tasks: summarizing competitor reports, drafting battlecard bullet points, analyzing a competitor’s messaging page. Usage is ad-hoc and inconsistent.

Characteristics: No standardized prompts or workflows. Each person uses AI differently (and with varying effectiveness). Insights aren’t shared systematically. AI usage is siloed.

Common at: Most mid-market SaaS teams today. This is where the majority of teams reading this article sit.

Next step: Standardize your prompts. Create a shared prompt library for common CI tasks. Establish a regular analysis cadence (weekly competitor review using consistent AI workflows).
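A shared prompt library can start as nothing more than named templates in one file the whole team uses. A minimal Python sketch built on the standard library's `string.Template`; the template names and wording are hypothetical examples, and versioning the names (`/v1`) lets the team compare prompt revisions.

```python
from string import Template

# Hypothetical shared library: versioned names so the team can compare revisions.
PROMPT_LIBRARY = {
    "pricing_page_summary/v1": Template(
        "Summarize $competitor's pricing page below. List each plan, its price, "
        "and its main limits. Quote figures exactly as written; if a figure is "
        "missing, say so instead of estimating.\n\n$page_text"
    ),
    "battlecard_update/v1": Template(
        "Update the battlecard for $competitor using only the new intelligence "
        "below. Return four sections: Positioning, Strengths, Weaknesses, "
        "Objection handling.\n\nNew intelligence:\n$intel"
    ),
}


def render(name: str, **fields: str) -> str:
    """Fill a library template; raises KeyError if a required field is missing."""
    return PROMPT_LIBRARY[name].substitute(**fields)


print(render("pricing_page_summary/v1", competitor="Acme",
             page_text="Starter $29/month. Pro $79/month."))
```

Using `substitute` rather than `safe_substitute` is deliberate: a prompt sent with a missing field fails loudly instead of reaching the model half-filled.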

Level 3 — AI-Augmented (Structured Workflows)

The CI team has defined AI-powered workflows for core tasks. Monitoring, analysis, and battlecard updates follow structured processes with AI embedded at key steps. There’s a prompt library. There’s a cadence.

Characteristics: Documented prompt templates, automated monitoring for top competitors, regular AI-assisted analysis cycles, structured output formats for deliverables.

Common at: Mature mid-market teams and early-stage enterprise CI programs.

Next step: Integrate AI across the full pipeline. Connect your collection layer to your analysis layer to your distribution layer so intelligence flows without manual handoffs.

Level 4 — AI-Integrated (Full Pipeline)

AI is embedded across all four tech stack layers. Collection runs automatically. Processing happens without manual intervention. Analysis generates first-draft deliverables. Distribution is targeted and context-aware. Human analysts focus on strategic judgment and exception handling.

Characteristics: Automated collection and enrichment, AI-generated first drafts of all CI deliverables, human review and refinement workflows, automated distribution triggered by deal stage or competitive mentions.

Common at: Well-resourced enterprise CI teams with dedicated headcount.

Next step: Move toward predictive capabilities and explore AI agents that surface intelligence on their own rather than waiting to be queried.

Level 5 — AI-Native (Autonomous CI)

AI agents autonomously monitor, analyze, and deliver competitive intelligence. Human oversight shifts from “doing CI” to “directing CI strategy.” The system identifies intelligence gaps, suggests new sources to monitor, and surfaces insights before anyone asks.

Characteristics: Autonomous CI agents, predictive intelligence capabilities, self-updating deliverables, automatic insight surfacing, multi-agent CI systems.

Current reality: Very few teams have reached this level. Crayon’s MCP (Model Context Protocol) server, launched in September 2025 and billed as the first CI-specific MCP server, is an early signal of this direction. AI agents that build competitive dashboards and deliver intelligence through conversational interfaces are emerging but still early.

Important: Level 5 is aspirational for most teams. The practical goal for 2026 is reaching Level 3 or Level 4. Don’t let the promise of autonomous CI prevent you from implementing what works today.

Quick Self-Assessment: What Level Are You?

Answer these six questions to identify your current maturity level:

- Do you have any automated competitor monitoring (even Google Alerts)? If no → Level 1.

- Does anyone on your team use AI tools (ChatGPT, Claude) for CI tasks? If yes but inconsistently → Level 2.

- Do you have documented AI workflows and prompt templates for CI? If yes → Level 3.

- Does intelligence flow automatically from collection through to distribution without manual handoffs? If yes → Level 4.

- Do AI agents surface competitive intelligence without human initiation? If yes → Level 5.

- When was the last time your battlecards were updated? If >90 days → you’re likely at Level 1–2 regardless of tooling.
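One possible reading of the six questions as a decision rule, sketched in Python. The precedence (the first question gates everything, and battlecards older than 90 days cap the result at Level 2) is an interpretation of the list, not a scoring formula defined by the model.

```python
def maturity_level(
    has_monitoring: bool,
    uses_ai: bool,
    has_documented_workflows: bool,
    has_automated_flow: bool,
    has_proactive_agents: bool,
    days_since_battlecard_update: int,
) -> int:
    """Map self-assessment answers to a maturity level (1-5), one interpretation."""
    if not has_monitoring:
        level = 1
    elif has_proactive_agents:
        level = 5
    elif has_automated_flow:
        level = 4
    elif has_documented_workflows:
        level = 3
    elif uses_ai:
        level = 2
    else:
        level = 1
    # Stale battlecards suggest Level 1-2 regardless of tooling.
    if days_since_battlecard_update > 90:
        level = min(level, 2)
    return level


print(maturity_level(has_monitoring=True, uses_ai=True, has_documented_workflows=True,
                     has_automated_flow=False, has_proactive_agents=False,
                     days_since_battlecard_update=30))  # 3
```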

How to Build an AI-Powered CI Program: 6 Steps

This implementation guide targets mid-market teams at companies with 50–500 employees. You don’t need a dedicated CI analyst or an enterprise budget. You need a structured approach and 30 days to prove the concept.

Step 1 — Audit Your Current CI Workflow

Before adding AI, document what you already do. Map your existing competitive intelligence process:

- What competitors do you track? List them. If the answer is “it depends” or “whoever comes up in deals,” you need to formalize this first. Our guide to types of competitors covers direct, indirect, aspirational, and perceived competitors. You should track all four categories.

- What sources do you monitor? Websites, pricing pages, job boards, review sites, social media, press releases?

- How often? Weekly? Monthly? “When someone remembers”?

- What deliverables do you produce? Battlecards? Competitive briefs? Nothing?

- Where are your biggest time sinks? The tasks where you spend the most hours for the least insight.

Use the maturity model above to assess your starting point. Most teams discover they’re at Level 1 or Level 2, which is fine. That’s where the highest-ROI improvements live.

Step 2 — Pick Your First AI Use Case

Don’t try to overhaul everything at once. Start with the single use case that offers the highest return on your time investment.

Our recommendation for most teams: Start with one of these two:

- Automated competitor monitoring (Layer 1), if your biggest pain is missing competitor moves because nobody checked the right source at the right time.

- AI-assisted battlecard drafting (Layer 3), if your sales team is flying blind in competitive deals because your battlecards are stale or nonexistent.

Selection criteria: Choose the use case where you currently spend the most time on repetitive tasks with clear inputs and outputs. AI excels at volume and speed. Start where those matter most.

Step 3 — Build or Buy: Choose Your Approach

Three paths exist, each suited to different team profiles:

- Option A — DIY with general AI tools: Use ChatGPT/Claude directly for analysis. Best for teams under 20 people with limited budgets who want to test AI-CI fit before committing.

- Option B — Dedicated CI platform: Purchase Klue, Crayon, or Kompyte. Best for teams with a proven CI function, 50+ sales reps, and executive sponsorship for the investment.

- Option C — Hybrid: Combine monitoring tools (Visualping, Feedly) with general AI (Claude/GPT) for analysis and Slack for distribution. Best for mid-market teams who want structured AI-CI without enterprise pricing.

We cover each option in detail in the next section. Decision factors include team size, budget, technical capability, and your current maturity level.

Step 4 — Start with a 30-Day Pilot

Commit to 30 days. Pick 2–3 competitors to track. Set up your chosen AI tooling alongside your existing manual process.

During the pilot, measure:

- Time saved: How many hours per week does AI save versus your manual process?

- Signals caught: Did AI surface competitive moves you would have missed manually?

- Output quality: Are AI-generated battlecard drafts or analysis summaries useful, or do they need heavy editing?

- Coverage expansion: Can you now monitor competitors or sources that were previously impossible to track?

Running AI alongside your manual process (not replacing it) gives you a direct comparison. If AI catches three pricing changes you missed manually in week two, you have your proof of concept.
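Keeping that side-by-side comparison honest is easier if each detected competitive move gets a short ID in both logs. A minimal Python sketch with hypothetical IDs; the "manual only" bucket matters as much as "AI only", because it shows where your AI setup has blind spots.

```python
def compare_pilot(manual: set[str], ai: set[str]) -> dict[str, set[str]]:
    """Split competitive moves by which process caught them during the pilot."""
    return {
        "both": manual & ai,
        "ai_only": ai - manual,      # moves the manual process missed
        "manual_only": manual - ai,  # gaps in the AI setup worth fixing
    }


# Hypothetical move IDs logged by each process over the 30 days.
manual_log = {"acme-pricing-0303", "globex-launch-0312"}
ai_log = {"acme-pricing-0303", "globex-launch-0312", "acme-pricing-0317",
          "initech-vp-sales-hire", "acme-pricing-0324"}

result = compare_pilot(manual_log, ai_log)
print(sorted(result["ai_only"]))
# ['acme-pricing-0317', 'acme-pricing-0324', 'initech-vp-sales-hire']
```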

Step 5 — Standardize and Scale

Once the pilot proves value, lock in what works:

- Document everything: Create prompt templates, workflow documentation, tool configurations, and training materials.

- Train the team: Share what you’ve learned. Distribute AI CI workflows across product marketing, sales, and strategy.

- Expand coverage: Add more competitors to your monitoring. Add more sources. Layer in additional use cases from the seven applications above.

- Build your prompt library: The difference between Level 2 and Level 3 on the maturity model is standardized, repeatable AI workflows. A shared prompt library is the foundation.

Step 6 — Measure ROI and Iterate

Track metrics that connect AI CI to business outcomes:

- Time-to-insight reduction: How much faster do competitive intelligence deliverables reach stakeholders?

- Coverage expansion: Competitors monitored × sources tracked (before and after)

- Deliverable quality: Sales team satisfaction scores on battlecard usefulness

- Pipeline impact: Win rate changes in competitive deals, deal velocity improvements

- Cost efficiency: Hours saved × loaded cost per hour
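The two formula-style metrics in the list above reduce to simple arithmetic. A worked Python sketch with hypothetical inputs: 10 hours a week of manual CI dropping to 2, a $75 loaded hourly cost, a $72/month tool stack, and coverage growing from 3 competitors × 15 sources to 5 × 40. Weekly hours are converted to monthly at 52/12 weeks per month.

```python
def ci_roi_snapshot(
    hours_before: float,               # CI hours per week before AI
    hours_after: float,                # CI hours per week with AI
    loaded_cost_per_hour: float,
    tool_cost_per_month: float,
    coverage_before: tuple[int, int],  # (competitors monitored, sources tracked)
    coverage_after: tuple[int, int],
) -> dict[str, float]:
    # Hours saved x loaded cost, converted from weekly to monthly (52 weeks / 12 months).
    value = (hours_before - hours_after) * loaded_cost_per_hour * 52 / 12
    return {
        "coverage_before": coverage_before[0] * coverage_before[1],
        "coverage_after": coverage_after[0] * coverage_after[1],
        "monthly_value_of_hours_saved": round(value, 2),
        "net_monthly_value": round(value - tool_cost_per_month, 2),
    }


print(ci_roi_snapshot(hours_before=10, hours_after=2, loaded_cost_per_hour=75,
                      tool_cost_per_month=72, coverage_before=(3, 15),
                      coverage_after=(5, 40)))
# {'coverage_before': 45, 'coverage_after': 200, 'monthly_value_of_hours_saved': 2600.0, 'net_monthly_value': 2528.0}
```

Time saved is only the cost side; pipeline impact still has to be measured separately through win rates in competitive deals.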

Industry benchmarks suggest 10–20% ROI from AI-powered CI programs (Competitive Intelligence Alliance). Organizations that systematically measure CI ROI report 23% higher revenue growth and 18% better profit margins (SCIP). The competitive intelligence tools market is projected to grow at a 19.96% CAGR to $1.46 billion by 2030 (Mordor Intelligence), a signal that more companies are betting the investment pays off.

Run quarterly reviews: what’s working, what’s not, where to invest next. AI-powered CI isn’t a “set and forget” system. It’s a capability you develop over time.

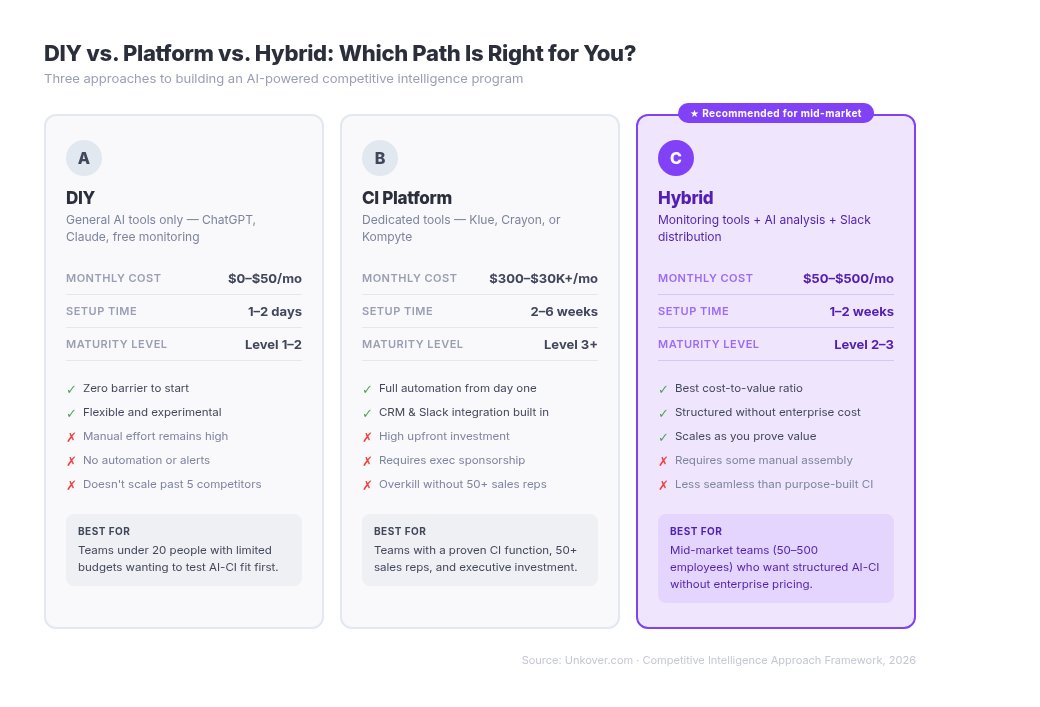

DIY vs. Platform vs. Hybrid: Choosing Your AI CI Approach

This is the honest comparison that no vendor article will give you. Every CI platform company steers you toward buying their software. We don’t sell CI tools. Here’s what actually makes sense for different team profiles.

| | Option A: DIY | Option B: CI Platform | Option C: Hybrid |
|---|---|---|---|
| Monthly cost | $0–$50 | $300–$2,500+ (enterprise tiers $25,000+/year) | $50–$500 |
| Setup time | 1–2 days | 2–6 weeks | 1–2 weeks |
| Best for | Teams <20 people, testing AI-CI fit | Dedicated CI/PMM function, 50+ reps | Mid-market teams (50–500 employees) |
| Automation level | Low (manual workflows) | High (end-to-end) | Medium (selective automation) |
| AI flexibility | High (use any model) | Low (vendor’s AI only) | High (mix and match) |
| Scalability | Limited | High | Moderate |
| Vendor lock-in risk | None | High | Low |
| Best maturity level | Level 1–2 | Level 3+ | Level 2–3 |

Option A — DIY (ChatGPT/Claude + Manual Workflows)

Use general-purpose AI tools directly for AI competitor analysis. Copy a competitor’s pricing page into Claude and ask it to compare pricing structures. Feed earnings call transcripts into ChatGPT and ask it to extract competitive positioning statements.

Pros: Flexible, cheap, builds AI literacy across your team, no vendor lock-in.

Cons: Manual effort at every step, no automation, quality depends entirely on prompt quality, doesn’t scale beyond 3–5 competitors.

When to choose: You’re at Level 1–2 on the maturity model. You want to prove that AI adds value to your CI workflow before spending money. This is where most teams should start.

Option B — Dedicated CI Platform with AI

Purchase Klue, Crayon, or Kompyte. These platforms handle the full stack (collection, processing, analysis, and distribution) with AI features built in. See our complete CI tools guide for detailed reviews.

Pros: Automated collection across hundreds of sources, integrated workflows purpose-built for CI, vendor support and training, established reporting.

Cons: Expensive ($300–$2,500+/month for mid-market; $25,000+/year at enterprise tiers), vendor lock-in, significant learning curve, may include features you’ll never use.

When to choose: You’re at Level 3+ on the maturity model. You’ve already proven CI delivers measurable value. You have executive sponsorship and a real budget. Your team has outgrown manual workflows.

Option C — Hybrid (DIY Foundation + Specialized Tools)

Combine best-in-class point solutions: Visualping for website monitoring, Feedly Pro+ for news aggregation, Claude or GPT for analysis, and Slack for distribution. You get structured competitive intelligence automation without the enterprise platform price tag.

Pros: AI flexibility for analysis with tool-specific automation for collection and distribution. Scales incrementally. No single vendor dependency.

Cons: Requires integration work (connecting tools via n8n, Make, or Zapier). More moving parts to manage. No single dashboard view.

When to choose: You’re at Level 2–3 and want to build toward Level 4 incrementally. Budget: $50–$500/month depending on tools selected.

Example stack: Visualping ($10–$40/month) + Feedly Pro+ ($12/month) + Claude Pro ($20/month) + Slack (free) = $42–$72/month for a functional AI-powered CI program.

Our recommendation: Most mid-market teams should start with Option A. Validate that AI improves your CI outcomes during a 30-day pilot. Then graduate to Option C, adding monitoring and automation tools as specific needs justify them. Move to Option B only when CI is a proven function with executive sponsorship, dedicated headcount, and a budget that makes the ROI math work.

One thing to keep in mind as you progress: “AI by itself is great. It needs data,” says Chieruzzi. “Where enterprise platforms really add value is in providing data connections, both to ingest data and to distribute it out to the team. If competitive intelligence doesn’t reach the sales team, it’s quite useless.” AI can get you to a certain level with the DIY approach, but you’ll eventually hit a wall without structured data connections. For smaller companies, exploring MCP integrations can help bridge that gap — connecting AI tools to your CRM or team platform — before committing to a full enterprise solution.

What AI Can’t Do for Competitive Intelligence

Every vendor article oversells AI’s capabilities. Here’s what they won’t tell you.

“AI sometimes makes you feel like a monkey with a machine gun,” says Chieruzzi. “It makes you feel really powerful, but you need to be in control.”

It’s easy to start overdoing it: building way more than what you need, monitoring too many data sources that your team isn’t able to follow up on, creating maintenance burdens that consume the time you saved. “It’s easy to build with AI, but you still have to do the maintenance, and that part is time-consuming. Just because we could, doesn’t mean we should.”

Hallucination Risk Is Real

Large language models confidently generate plausible-sounding but incorrect competitive data. Ask ChatGPT for a competitor’s current pricing and you’ll often get numbers that are outdated, fabricated, or pulled from an entirely different company with a similar name.

This isn’t a minor issue in CI. Acting on hallucinated competitive data — such as briefing your sales team with AI-generated pricing that’s wrong or building a strategy around a competitor feature that doesn’t exist — damages credibility and produces bad decisions.

Mitigation: Use AI for structure, synthesis, and pattern recognition. Never use it as a primary source of competitive facts. Every AI-generated claim about a competitor must be verified against the original source: their website, their press release, their product documentation.
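Part of that verification can be automated as a first pass. A minimal Python sketch that flags dollar figures in an AI-generated summary which don't appear anywhere in the source page text you fetched yourself; the example texts are hypothetical, and figures it doesn't flag still need a human to confirm they belong to the right plan and competitor.

```python
import re

# Dollar amounts such as $79, $1,299, or $12.50 (a trailing comma is not captured).
MONEY = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?")


def unverified_figures(ai_summary: str, source_text: str) -> list[str]:
    """Dollar figures in an AI summary that never appear in the original source."""
    source_figures = set(MONEY.findall(source_text))
    return [fig for fig in MONEY.findall(ai_summary) if fig not in source_figures]


source = "Starter $29/month. Pro $79/month. Enterprise: contact sales."
summary = "Acme charges $29 for Starter and $99 for Pro."
print(unverified_figures(summary, source))  # ['$99']
```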

For teams building more advanced AI CI workflows, there’s a stronger mitigation strategy: multi-agent verification. “You can have multiple agents, one doing the job and another doing a sanity check, verifying the sources, verifying that the assumptions and content of the first CI agent are correct,” Chieruzzi recommends. Build this into your pipeline using no-code platforms like n8n or Make. One agent gathers intelligence, and a second agent validates it before it reaches your team.
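The two-agent pattern can be sketched independently of any particular platform. A minimal Python example in which the gathering and verifying agents are plain callables; the finding format (`claim`, `source_url`) and the stub agents are hypothetical stand-ins for whatever LLM calls your n8n or Make workflow makes. Anything unsourced or unverified goes to a human queue instead of the team.

```python
from typing import Callable

# Hypothetical shapes: a finding is a dict with "claim" and "source_url".
Finding = dict
GatherAgent = Callable[[str], list]
VerifyAgent = Callable[[Finding], bool]


def run_with_verification(competitor: str, gather: GatherAgent, verify: VerifyAgent):
    """First agent gathers findings; a second agent checks each one.

    Findings without a source, or that fail verification, go to human review
    instead of reaching the team.
    """
    published, needs_review = [], []
    for finding in gather(competitor):
        if finding.get("source_url") and verify(finding):
            published.append(finding)
        else:
            needs_review.append(finding)
    return published, needs_review


# Stub agents standing in for real LLM calls.
def fake_gather(competitor: str) -> list:
    return [
        {"claim": f"{competitor} raised Pro to $79/month", "source_url": "https://acme.example/pricing"},
        {"claim": f"{competitor} is acquiring Initech", "source_url": ""},
    ]


def fake_verify(finding: Finding) -> bool:
    # A real checker would re-fetch source_url and confirm the claim against it.
    return finding["source_url"].startswith("https://acme.example/")


published, needs_review = run_with_verification("Acme", fake_gather, fake_verify)
print(len(published), len(needs_review))  # 1 1
```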

“Garbage In, Garbage Out” Still Applies

AI amplifies data quality issues. If your competitive data sources are stale, biased, or incomplete, AI processes those flaws faster and at greater scale. You don’t get better intelligence. You get worse intelligence delivered more efficiently.

CI still requires investment in quality data sources and regular validation of the intelligence pipeline. An AI system monitoring a competitor website that hasn’t been updated in six months produces six months of false confidence.

Relationship Intelligence Remains Human

AI can’t tell you what a competitor’s VP of Sales said at an industry dinner. It can’t read the room at a conference when a competitor’s booth traffic drops noticeably. It doesn’t know what the mood is inside a competitor’s engineering team or what their board discussed in a closed session.

The highest-value competitive intelligence often comes from human networks: conversations at events, industry relationships, customer feedback shared informally, job candidates interviewing at competitors. AI handles what’s published. Humans handle what’s whispered. A mature CI program needs both.

Ethical Boundaries and Legal Risks

AI makes it technically easier to cross ethical lines in competitive intelligence. Scraping protected content, monitoring competitor employees’ personal social media accounts, reverse-engineering proprietary data through prompt engineering: all become more tempting when AI reduces the effort required.

Rule of thumb: If you wouldn’t want a competitor doing it to your company, don’t use AI to do it to theirs.

An emerging risk that few teams have considered: AI agents that autonomously cross ethical lines without intent. “Have guardrails to monitor the work of your agents,” Chieruzzi warns. “Just because you want to respect ethical boundaries doesn’t mean an agent won’t go the extra mile to get the data you need, potentially doing something unethical. Not on purpose, just because it doesn’t fully understand the regulation of the country you’re operating in.” As CI workflows become more automated, baking ethical constraints directly into your prompts and agent configurations becomes non-negotiable.
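Part of that guardrail can be enforced in code instead of being left to the prompt. This Python sketch gates every fetch an agent attempts behind two checks. The first is a domain allowlist (a hypothetical set your legal team would approve, which keeps personal social profiles out by default). The second is the site's robots.txt, parsed with the standard library's `urllib.robotparser`. Treat it as a floor, not legal clearance: robots.txt compliance says nothing about terms of service or privacy law.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Hypothetical allowlist: company-level public sources approved by your legal team.
ALLOWED_DOMAINS = {"acme.example", "news.example"}
USER_AGENT = "ci-agent"  # hypothetical user-agent string your agent identifies as


def is_fetch_permitted(url: str, robots_txt: str) -> bool:
    """Guardrail every agent fetch must pass.

    Rejects hosts outside the allowlist, then applies the site's robots.txt rules
    for our user agent. robots.txt is passed in as text so the check runs offline;
    a real pipeline would fetch and cache it per domain.
    """
    host = urlparse(url).hostname or ""
    if host not in ALLOWED_DOMAINS:
        return False
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(USER_AGENT, url)


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /internal/\n"
    for url in ("https://acme.example/pricing",
                "https://acme.example/internal/roadmap",
                "https://social.example/in/acme-vp-sales"):
        print(url, "->", "allowed" if is_fetch_permitted(url, robots) else "blocked")
```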

Legal guardrails to respect:

- Computer Fraud and Abuse Act (CFAA, United States): Unauthorized access to competitor systems is illegal, regardless of whether AI performs the access

- GDPR and privacy regulations: Monitoring individuals (not companies) raises data protection issues, especially in the EU

- Trade secret law: AI-generated intelligence reports can inadvertently incorporate confidential information from training data or scraped sources

- Terms of service: Many websites prohibit automated scraping in their terms. Using AI to bypass these restrictions doesn’t make the scraping permissible

- Jurisdiction matters: In some countries (the United States, for example), scraping publicly available data is generally acceptable. In others, it’s not. Whether data sits behind a login, where you’ve accepted terms of service, or on a public page can change the legal calculus significantly. Always consult your legal team to understand what’s permissible in the countries where you operate.

Strategic Judgment Is Irreplaceable

AI identifies patterns. Humans make judgment calls about what those patterns mean and what to do about them.

“Competitor X lowered pricing 15%” is a data point any AI tool can surface. “They’re preparing for a market-share land grab before their Series C” is strategic judgment that requires understanding the competitor’s funding trajectory, board pressure, market timing, and broader industry dynamics. AI delivers the data. Your CI team provides the interpretation that turns data into a competitive advantage.

The Future of AI Competitive Intelligence

These trends aren’t pure speculation. They’re capabilities emerging now, with early evidence to support their trajectory.

AI agents for CI. Autonomous agents that monitor, analyze, and deliver competitive intelligence without human initiation are moving from concept to reality. Crayon’s launch of the first competitive intelligence MCP (Model Context Protocol) server in September 2025 is the clearest signal that agent-based CI is arriving: it lets AI tools like Claude, ChatGPT, and Glean access CI data through a standardized protocol. Within 12–18 months, expect CI agents that build competitive dashboards and surface intelligence through conversational interfaces.

MCP matters for CI specifically because, as Chieruzzi puts it, “CI is a lot about moving data in and out, and MCP is going to make the technical infrastructure way easier.” CRMs like HubSpot are already releasing their own MCP servers, connecting the ecosystem that CI depends on for both data ingestion and intelligence distribution.

Multimodal CI. AI analyzing competitor video content, product demos, conference presentations, and podcast appearances — not just text. Multimodal large language models can already process video and audio. The application to CI is straightforward: analyze a competitor’s product demo for feature changes, or track messaging shifts in their CEO’s keynote presentations. Practical adoption is 12–24 months out for most teams.

Predictive CI. Moving from reactive intelligence (“what did competitors do?”) to proactive intelligence (“what will competitors do next?”). AI can identify patterns in hiring data, patent filings, tech stack changes, and social signals that correlate with specific strategic moves. It’s still early, but the shift from “alerting on what happened” to “forecasting what’s coming” is the next frontier.

Real-time CI in deal flow. AI surfacing relevant competitive intelligence inside CRM during active deals, triggered by deal stage, competitor mentioned, or objection logged. This capability exists in enterprise CI platforms today. It will become accessible to mid-market teams within the next year.
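The trigger logic behind in-deal intelligence is simpler than it sounds. This Python sketch maps a CRM deal update to the battlecard sections worth surfacing. The `DealEvent` fields, stage names, objection types, and battlecard store are all hypothetical; real CRM payloads and CI platforms structure this differently.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DealEvent:
    """Hypothetical, simplified CRM deal update; real webhook payloads differ."""
    deal_id: str
    stage: str
    competitors_mentioned: list[str] = field(default_factory=list)
    objection: Optional[str] = None


# Hypothetical battlecard store: competitor -> section -> talk track.
BATTLECARDS = {
    "acme": {
        "overview": "[Acme overview talk track]",
        "pricing": "[Acme pricing talk track]",
        "security": "[Acme security talk track]",
    }
}

# Assumed mapping of deal stage -> sections worth surfacing at that stage.
STAGE_SECTIONS = {
    "discovery": ["overview"],
    "proposal": ["overview", "pricing"],
    "negotiation": ["pricing"],
}

# Assumed mapping of logged objection type -> battlecard section.
OBJECTION_SECTIONS = {"price": "pricing", "security": "security"}


def intel_for_event(event: DealEvent) -> list[str]:
    """Pick the battlecard snippets to show in the CRM for one deal update.

    Stage sets the baseline sections, a logged objection adds its section,
    and only competitors actually mentioned on the deal are looked up.
    """
    sections = list(STAGE_SECTIONS.get(event.stage, []))
    extra = OBJECTION_SECTIONS.get(event.objection) if event.objection else None
    if extra and extra not in sections:
        sections.append(extra)

    snippets = []
    for competitor in event.competitors_mentioned:
        card = BATTLECARDS.get(competitor.lower(), {})
        snippets.extend(card[s] for s in sections if s in card)
    return snippets


if __name__ == "__main__":
    event = DealEvent("D-1042", stage="proposal",
                      competitors_mentioned=["Acme"], objection="security")
    for snippet in intel_for_event(event):
        print(snippet)
```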

Temper expectations. Most of these capabilities are 12–24 months from mainstream adoption for mid-market teams. Don’t wait for them. The practical focus for 2026 is moving from Level 2 to Levels 3–4 on the maturity model. The competitive advantage isn’t next-generation AI. It’s implementing what works today while your competitors are still debating whether to start.

Frequently Asked Questions

What is AI-powered competitive intelligence?

AI-powered competitive intelligence uses artificial intelligence — including large language models, natural language processing, and machine learning — to automate and improve the process of gathering, analyzing, and distributing information about competitors. It spans the full CI workflow from automated monitoring to AI-generated battlecards to predictive analysis of competitor moves.

How is AI transforming competitive intelligence?

AI changes CI across seven areas: automated competitor monitoring, real-time battlecard generation, win/loss pattern analysis, pricing intelligence, content and messaging tracking, market sentiment analysis, and predictive intelligence. The biggest impact is speed and coverage. Tasks that took 10+ hours per week now take 1–2 hours of review, and teams can monitor 100+ sources per competitor instead of 5–10.

Can AI replace competitive intelligence analysts?

No. AI handles data collection, pattern recognition, and first-draft generation — the speed and scale tasks. Human analysts remain essential for strategic judgment, relationship intelligence (what’s happening behind closed doors), contextual interpretation, and ethical oversight. The most effective model is AI augmentation: AI doing the heavy lifting while humans provide the strategic thinking. Companies that try to fully automate CI without human oversight produce more intelligence faster, but not better intelligence.

What are the best AI tools for competitive intelligence?

The best AI for competitor analysis depends on your budget and maturity level. For dedicated platforms: Klue, Crayon, and Kompyte offer end-to-end AI-powered CI. For DIY: ChatGPT and Claude are effective for analysis and synthesis at $0–20/month. For monitoring: Visualping and Feedly Pro+ with AI filtering. For the hybrid approach most mid-market teams should pursue: combine Visualping + Feedly + Claude + Slack for $50–100/month. See our complete CI tools guide for detailed reviews of each platform.

How much does an AI-powered CI program cost?

Three tiers: DIY using ChatGPT or Claude costs $0–$50/month. Hybrid stack combining monitoring tools with general AI costs $50–$500/month. Dedicated CI platforms run $300–$30,000+/month depending on team size and features. Most mid-market teams (50–500 employees) can build a functional AI-powered CI program for under $200/month using the hybrid approach. The investment typically pays for itself within 90 days through time savings alone.

What are the risks of using AI for competitive intelligence?

Four primary risks: (1) Hallucination, where AI generates plausible but incorrect competitive data — dangerous when used to brief sales teams. (2) Data quality amplification, where bad inputs produce worse outputs at faster speed. (3) Ethical boundary violations, where AI makes it easier to cross lines into over-monitoring competitor employees or scraping protected content, and AI agents may autonomously cross ethical boundaries without intent. (4) Over-reliance, where teams that trust AI output without human verification make worse decisions, not better ones. Mitigation: always verify AI-generated intelligence against primary sources, and consider multi-agent verification pipelines for automated workflows.

How do AI agents work for competitive intelligence?

AI agents are autonomous systems that monitor competitor activity, analyze changes, and deliver insights without human initiation. They surface intelligence on their own rather than waiting to be asked. In practice, an AI agent might continuously scan competitor websites, detect a pricing change, analyze its implications, update relevant battlecards, and notify the sales team — all without manual intervention. Crayon’s MCP server (launched September 2025) is the first CI-specific implementation, enabling AI tools to access competitive intelligence through a standardized protocol. Agent-based CI represents the shift from “tools you query” to “systems that work for you.”
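The detect-and-notify core of that agent loop can be sketched in a few lines of Python using the standard library's `difflib`. The function names and the `notify` hook (for example, a wrapper around a Slack webhook) are hypothetical, and the analysis and battlecard-update steps, which would typically be LLM calls, are deliberately left out.

```python
import difflib
from typing import Callable


def detect_change(previous: str, current: str) -> list[str]:
    """Return changed lines between two page snapshots ('-' removed, '+' added).

    Identical snapshots produce an empty list.
    """
    diff = list(difflib.unified_diff(previous.splitlines(), current.splitlines(),
                                     lineterm="", n=0))
    # diff[0:2] are the '---'/'+++' headers; '@@' lines mark hunk positions.
    # With n=0 there are no context lines, so what remains is exactly the changes.
    return [line for line in diff[2:] if not line.startswith("@@")]


def run_monitor_step(url: str, previous: str, current: str,
                     notify: Callable[[str], None]) -> bool:
    """One pass of a hypothetical monitoring agent over a single page.

    If the page changed, hand a summary of the changed lines to `notify`.
    Returns True when a change was detected.
    """
    changes = detect_change(previous, current)
    if changes:
        notify(f"Change detected on {url}:\n" + "\n".join(changes))
    return bool(changes)


if __name__ == "__main__":
    old = "Starter: $19/month\nPro: $49/month"
    new = "Starter: $19/month\nPro: $59/month"
    run_monitor_step("https://acme.example/pricing", old, new, print)
```

Passing `notify` in as a function keeps the sketch independent of any particular delivery tool, and a real agent would run this step on a schedule for each monitored page.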

Start Building Your AI-Powered CI Program Today

The top Google result for “AI competitive intelligence” is a Reddit thread. Everyone’s asking questions, and very few teams are implementing answers.

You now have both the frameworks and the practical steps to close that gap. Use the maturity model to assess where your team stands today. Pick one high-ROI use case — automated monitoring or AI-assisted battlecard drafting — and run a 30-day pilot. Start with the DIY approach to validate that AI improves your CI outcomes before investing in tools.

The competitive advantage in 2026 isn’t AI itself. Every team will eventually adopt AI for CI, and the CI tools market is growing at nearly 20% annually. The advantage belongs to teams that implement AI-powered CI now, while competitors are still reading Reddit threads about whether they should start.

Next steps:

- New to competitive intelligence? Start with our guide to competitive intelligence fundamentals.

- Ready to choose tools? See our 2026 CI tools roundup for vendor-neutral platform reviews.

- Building battlecards? Our sales battle cards guide includes templates you can use today.

Subscribe to the Unkover newsletter for weekly competitive intelligence strategies, tool reviews, and frameworks — written for practitioners, not vendors.
